evals

Master Agentic AI.

Ship with confidence.

A complete evals framework for agentic systems. Measure what matters, automate with confidence, govern at scale, and continuously improve.

7 I.O.R.M.G.O.D Layers

4 M.A.G.I. Pillars

16 Eval Metrics

Metrics

QAG, G-Eval, Contextual Precision & Recall, Tool Correctness. ≤5 metrics per use case. Signal over noise.
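As a rough illustration of two of the metrics named above, here is a minimal Python sketch. It assumes one common rank-weighted formulation of contextual precision and a simple coverage definition of tool correctness. The function names and exact formulas are illustrative assumptions, not EvalMaster's own definitions.

```python
from typing import Sequence


def contextual_precision(relevance: Sequence[bool]) -> float:
    """Rank-weighted precision over retrieved chunks, in retrieval order.

    relevance[k] is True when the k-th retrieved chunk is relevant.
    Relevant chunks ranked near the top score higher than the same
    chunks ranked lower. Returns 0.0 when nothing relevant was retrieved.
    """
    hits = 0
    total = 0.0
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / rank  # precision@rank, counted only at relevant ranks
    return total / hits if hits else 0.0


def tool_correctness(expected: Sequence[str], called: Sequence[str]) -> float:
    """Fraction of expected tools that the agent actually called (assumed definition)."""
    if not expected:
        return 1.0
    called_set = set(called)
    return sum(1 for tool in expected if tool in called_set) / len(expected)


# Relevant chunks at ranks 1 and 3: (1/1 + 2/3) / 2, roughly 0.83
print(round(contextual_precision([True, False, True]), 2))
# One of two expected tools was called: 0.5
print(tool_correctness(["search_policy", "get_expense"], ["search_policy"]))
```

Both functions return a score in [0, 1], so each can be compared against a single threshold per use case.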

Automation

Evals run in CI/CD. Offline and online scoring. No manual spreadsheet review between you and a deploy.

Governance

Metric ownership, quarterly reviews, golden-dataset versioning, clear thresholds with escalation paths.

Improvement

Full-funnel tracing. Threshold tuning. Open and axial coding on real failures, creating a feedback loop that compounds.

Data you can trust

Evaluate what actually drives outcomes.

Ship faster

Automate evals in your workflow.

Govern with clarity

Own quality with clear standards.

Improve continuously

Turn insights into compounding gains.

“Evals are not QA. They're the control plane that keeps production LLM systems correct, governable, and shippable.”

The thesis behind EvalMaster

Why This Exists

LLMs fail in ways traditional software testing can't see.

A chatbot that answers “Yes, you can expense a coffee machine” when the exclusion policy says otherwise. An agent that calls a tool that doesn't exist. A RAG pipeline that retrieves last year's policy and gives confidently wrong answers. These aren't bugs you find with unit tests — they're failure modes that emerge from how LLMs interact with real data, real tools, and real users.

Evals are the discipline of catching these failures before production. You read traces, name the failure modes, build automated checks, and run them as quality gates in CI/CD. EvalMaster gives you the complete playbook: M.A.G.I. to operationalize it, I.O.R.M.G.O.D for where it lives in your stack.

Read the full evals guide

Before

Manual evaluation: slow, subjective, never at scale

Failures discovered by users, not CI gates

Zero visibility into hallucinations

No ownership of eval responsibilities

After M.A.G.I.

Automated judges run on every PR in <5 min

Quality gates block bad deploys before shipping

Full traces: cost, latency, quality per query

Clear metric ownership + quarterly reviews

Capabilities

Built for How LLMs Actually Fail

Failure Taxonomy

Read traces, name failure modes, build automated checks against what actually breaks.

CI/CD Gates

QAG, G-Eval, Contextual Precision with binary pass/fail thresholds in your pipeline.
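A binary gate of this kind can be sketched in a few lines of Python. This is a hedged example, not EvalMaster's implementation: the metric names, the threshold values, and the `gate` and `exit_code` helpers are all hypothetical, and the scores are assumed to come from whatever eval runner your pipeline already uses.

```python
from typing import List, Mapping


def gate(scores: Mapping[str, float], thresholds: Mapping[str, float]) -> List[str]:
    """Return the names of metrics that fail their threshold.

    A metric fails when its score is missing or below the minimum.
    An empty list means the gate passes.
    """
    failures = []
    for name, minimum in thresholds.items():
        score = scores.get(name)
        if score is None or score < minimum:
            failures.append(name)
    return failures


def exit_code(scores: Mapping[str, float], thresholds: Mapping[str, float]) -> int:
    """Map the gate result to a process exit code: 0 passes, 1 blocks the deploy."""
    failed = gate(scores, thresholds)
    for name in failed:
        print(f"FAIL {name}: score={scores.get(name)} min={thresholds[name]}")
    return 1 if failed else 0


# Hypothetical thresholds and scores, for illustration only.
THRESHOLDS = {"contextual_precision": 0.80, "tool_correctness": 0.95}
SCORES = {"contextual_precision": 0.86, "tool_correctness": 0.90}
print(exit_code(SCORES, THRESHOLDS))
```

In a CI job, the script would pass this value to `sys.exit`, so a non-zero result fails the pipeline step. Treating a missing score as a failure is a deliberate choice here: a metric that silently stopped reporting should not let a build through.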

Observability

Traces, spans, cost-per-query, quality A/B — all out of the box with Langfuse.

Improvement Loop

Threshold tuning, golden-dataset versioning, quarterly reviews. Evals that compound.

Builder's Playbook

7-Phase Framework

From problem definition to production

Explore →

The Framework

M.A.G.I.

Four pillars that turn “we should do evals” into a program people can actually run

Metrics

QAG, G-Eval, Contextual Precision & Recall, Tool Correctness. ≤5 metrics per use case.

Automation

Evals run in CI/CD. Offline and online scoring. No manual spreadsheet review.

Governance

Metric ownership, quarterly reviews, golden-dataset versioning, clear thresholds.

Improvement

Full-funnel tracing. Threshold tuning. A feedback loop that compounds.

For Builders

The Reading List

What evals actually are

The 5-step loop: traces → failure notes → failure modes → automated checks → CI/prod. Complete failure taxonomy included.

Read the guide

LangChain → LangGraph

When to orchestrate vs. chain.

8 Architecture Patterns

Simple RAG to enterprise multi-agent.

Agent Tool Hallucination

Schema validation + circuit breakers.
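To make the pairing concrete, here is a minimal Python sketch of schema validation plus a circuit breaker. Everything in it is an assumption for illustration: the `TOOL_SCHEMAS` registry, the tool names, and the three-failure limit are hypothetical, and a real system would likely use a fuller schema language such as JSON Schema.

```python
from dataclasses import dataclass
from typing import Any, Dict, List

# Hypothetical registry: tool name -> required argument names and types.
TOOL_SCHEMAS: Dict[str, Dict[str, type]] = {
    "search_policy": {"query": str},
    "get_expense": {"expense_id": int},
}


def validate_tool_call(name: str, args: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call is valid."""
    schema = TOOL_SCHEMAS.get(name)
    if schema is None:
        return [f"unknown tool: {name}"]  # the agent hallucinated a tool
    problems = []
    for arg, expected_type in schema.items():
        if arg not in args:
            problems.append(f"missing argument: {arg}")
        elif not isinstance(args[arg], expected_type):
            problems.append(f"wrong type for argument: {arg}")
    for arg in args:
        if arg not in schema:
            problems.append(f"unexpected argument: {arg}")
    return problems


@dataclass
class CircuitBreaker:
    """Opens after max_failures consecutive invalid tool calls."""

    max_failures: int = 3
    consecutive_failures: int = 0

    @property
    def is_open(self) -> bool:
        return self.consecutive_failures >= self.max_failures

    def record(self, ok: bool) -> None:
        # A valid call resets the streak; an invalid one extends it.
        self.consecutive_failures = 0 if ok else self.consecutive_failures + 1


breaker = CircuitBreaker()
for call in [("send_email", {}), ("get_expense", {"expense_id": "7"}), ("delete_all", {})]:
    breaker.record(not validate_tool_call(*call))
print(breaker.is_open)  # three invalid calls in a row, so the breaker is open
```

Once the breaker is open, the orchestrator can stop the agent loop and fall back to a safe response instead of letting it retry invalid calls indefinitely.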

RAG Context Failures

Partial evidence, dangerous answers.

Architecture

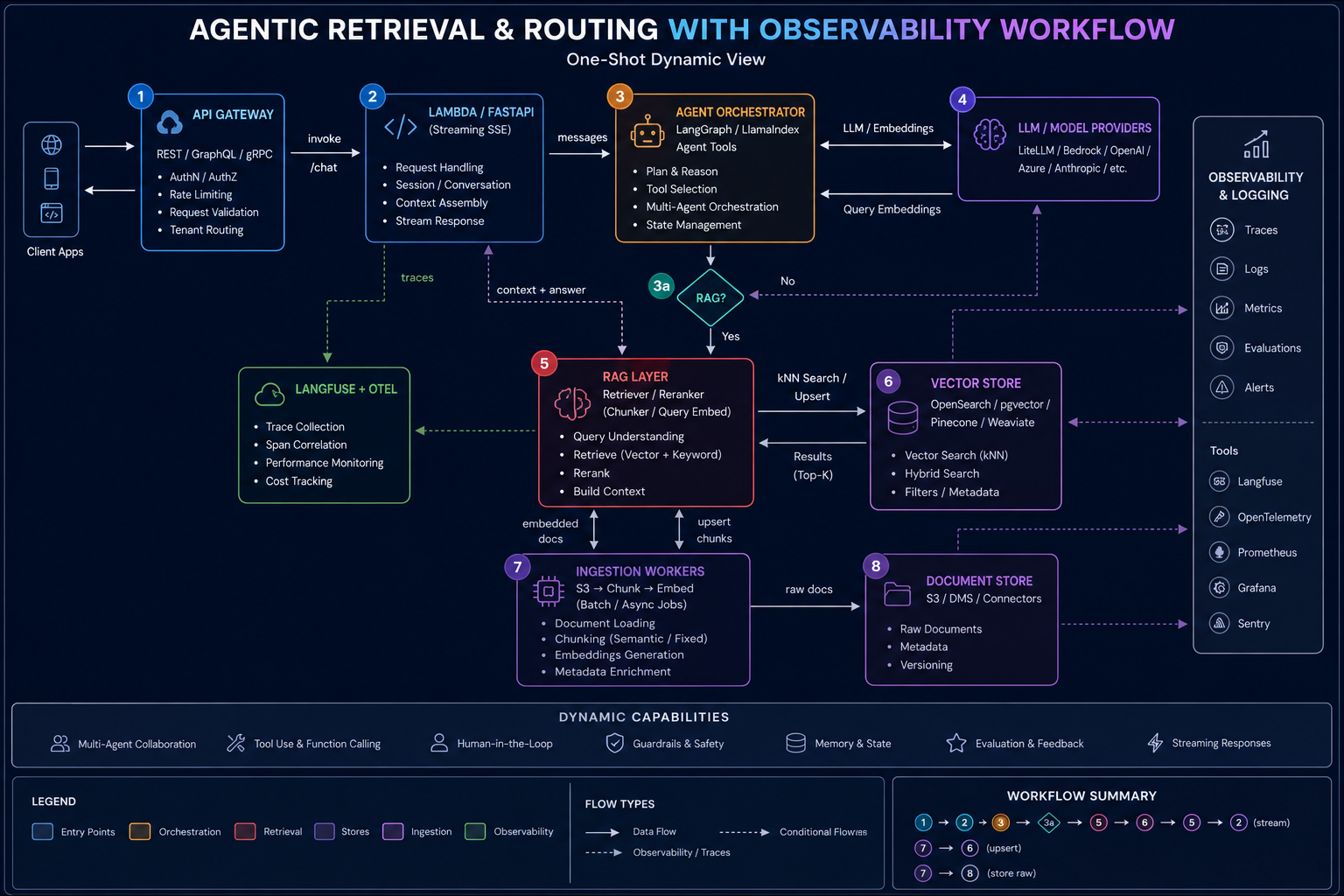

I.O.R.M.G.O.D

Interface & Gateway

Orchestrator / Agent

Retrieval (RAG)

Models

Guardrails

Observability & Eval

load-bearing

Data & Governance

Getting Started

Get Started in 3 Steps

Name Your Failure Modes

Read 40–100 traces. Group failures into 4–7 categories. This is your failure taxonomy.
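Once traces carry a failure label, the taxonomy itself is mostly counting. The sketch below assumes each reviewed trace has been hand-labeled with one failure mode. The label names and the `failure_taxonomy` helper are hypothetical examples, not a prescribed schema.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def failure_taxonomy(labels: Iterable[str]) -> List[Tuple[str, int, float]]:
    """Summarize hand-labeled failures as (mode, count, share), most frequent first."""
    counts = Counter(labels)
    total = sum(counts.values())
    return [(mode, n, n / total) for mode, n in counts.most_common()]


# Hypothetical labels written down while reading traces.
labels = [
    "stale_retrieval",
    "tool_hallucination",
    "stale_retrieval",
    "policy_contradiction",
]
for mode, count, share in failure_taxonomy(labels):
    print(f"{mode}: {count} ({share:.0%})")
```

Sorting by frequency shows which failure modes deserve an automated check first.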

30 min

Build Automated Judges

Create deterministic checks or LLM-as-judge evaluators. Set binary pass/fail thresholds. Wire into CI.
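A deterministic check with a binary verdict might look like the following Python sketch. The case shape (`cited_ids`, `retrieved_ids`), the `citations_grounded` check, and the 0.9 pass-rate threshold are assumptions chosen for illustration. An LLM-as-judge evaluator could be passed in the same way, as any callable that returns `True` or `False` for a case.

```python
from typing import Callable, Dict, List, Tuple

Case = Dict[str, list]  # assumed shape: {"cited_ids": [...], "retrieved_ids": [...]}


def citations_grounded(case: Case) -> bool:
    """Deterministic check: every source the answer cites was actually retrieved."""
    return set(case["cited_ids"]) <= set(case["retrieved_ids"])


def run_judge(
    judge: Callable[[Case], bool], cases: List[Case], min_pass_rate: float
) -> Tuple[float, bool]:
    """Apply a judge to each case and return (pass_rate, passed)."""
    verdicts = [judge(case) for case in cases]
    rate = sum(verdicts) / len(verdicts) if verdicts else 0.0
    return rate, rate >= min_pass_rate


# Hypothetical golden-dataset cases.
cases = [
    {"cited_ids": ["policy-2024"], "retrieved_ids": ["policy-2024", "faq-3"]},
    {"cited_ids": ["policy-2023"], "retrieved_ids": ["policy-2024"]},
]
rate, passed = run_judge(citations_grounded, cases, min_pass_rate=0.9)
print(rate, passed)  # 0.5 False
```

Keeping each judge to a single yes/no question makes the verdict easy to audit and simple to wire into a CI step.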

1–2 hrs

Monitor & Improve

Run evals in production with Langfuse. Sample failures for review. Tune thresholds quarterly.

Ongoing

Ready to Build Your Eval Program?

12-week cohort: failure taxonomy, judge calibration, CI gates, production monitoring, cost optimization.

Agentic AI, Ready for Reality.

Build, evaluate, and improve agentic systems that perform in the real world. From failure taxonomy to production monitoring.

Explore the framework

Structured Learning

Fundamentals to production.

Hands-on Practice

Templates and frameworks.

Expert Insights

Practitioners in the wild.

Lifetime Access

Always up-to-date.